Let AI Drive Your Car If You Don’t Mind Crashing

Human + AI > AI

By Troy Lowry

ChatGPT and AI do a number of things very well. In fact, some very specific tasks, such as protein folding, AI does at a superhuman level. But even where it is most proficient, it benefits from human oversight. Whether playing chess, driving cars, or writing a memo, AI will always sound very confident, but in every case it performs even better when a human is guiding it.

Confidence Does Not Equal Competence

A management consultant friend of mine complained that ChatGPT was sometimes giving a client incorrect answers but did so with such confidence and authority that the client was certain that the answers MUST be correct. “In other words,” I said, “it’s like a management consultant!”

Like much said in jest, there’s a vein of truth to this. While consultants bring knowledge, part of what they help with is what I call “The Toothpaste Problem.” Go into any drugstore in the U.S. these days, and you’ll find a wall of toothpaste brands and product variations with dozens if not hundreds of varieties. Should you get the tartar control? The whitening? The minty fresh breath? Gum care? Desensitizing?

Or, as my mother suggests, does it not really matter? She says the important thing is that you are brushing your teeth. Unless you have a specific need, the difference between toothpastes is inconsequential, and, in fact, the time and energy spent deciding which toothpaste to buy far outweigh any minor difference between them. As usual, I suspect my mother is on to something.

In short, a consultant can help you make a choice and be more assured that the choice is not a poor one. This, in and of itself, has a lot of value. Of course, the more confident you are in the consultant’s decision, the more value it has. Unfortunately, while AI is very confident in its answers, it does have a tendency to make mistakes.

Aiming to Please

ChatGPT is way too eager to please and, like any good sycophant, will give in almost immediately to any pushback. Go ahead and try it. Ask ChatGPT a question about a plot point in a movie or a book and then tell it the answer wasn’t correct1 and watch it bend over backwards to tell you that you are right about it being wrong.

A good advisor tells you what you don’t want to hear. ChatGPT, like any person who tells you only what they think you want to hear instead of what they believe to be the truth, gives advice you can never fully trust.

Worse, ChatGPT will bend over backwards to tell you the merits of your argument, without pushing back on the weaknesses. Even if you ask for the weaknesses in your argument,2 it will usually start off by telling you the strengths.

Self-Driving Cars

I am a strong believer in a future where car accidents will largely be a thing of the past because all vehicles are driven by AI. I also believe that this future is decades away. As AI proves itself, we can give it more and more autonomy, but we are not yet at the point where it can be trusted to fully take the wheel without human oversight. Current self-driving car technology, although impressive, still has real limitations. I was driving a Tesla with full self-driving capabilities recently, and I can tell you that it was very, very good. Good enough to lull me into a false sense of security. Even in urban driving, it would stop at the right time, pass other cars smoothly, and do all the right things. Well, at least 99.99% of the time. But every once in a while, it would do something wildly unpredictable like start to swerve off the road. It was so good that it would go for dozens of miles flawlessly, only to do something incredibly dangerous without warning. Unfortunately, in driving, 99.99% just isn’t good enough.
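To see why 99.99% falls short, here is a back-of-the-envelope sketch. It assumes, purely for illustration, that the 99.99% figure applies independently to each mile driven. That assumption, the function name, and the mileages are all hypothetical; none of this is a measurement of any real self-driving system.

```python
def chance_of_incident(per_mile_reliability: float, miles: int) -> float:
    """Probability of at least one failure over `miles`, assuming each mile
    succeeds independently with probability `per_mile_reliability`
    (an illustrative assumption, not real-world data)."""
    return 1.0 - per_mile_reliability ** miles


# Hypothetical trip lengths: a day trip, a month of commuting, a year of driving.
for miles in (100, 1_000, 10_000):
    risk = chance_of_incident(0.9999, miles)
    print(f"{miles:>6} miles: {risk:.1%} chance of at least one dangerous error")
```

Under that assumption, the risk climbs from about 1% over 100 miles to well over half over 10,000 miles, which is why a human still needs to be watching.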

AI in driving, much like in other fields, shows great promise but also requires a cautious approach. As AI continues to evolve, the collaboration between human intuition and AI's data-driven decision-making can lead to safer, more efficient driving experiences. Until AI reaches a level of sophistication where it can handle the unpredictability of real-world driving on its own, the combined strengths of humans and AI will provide the best path forward.

Cyborg Chess

If there’s one area where computers have truly outclassed humans, it’s chess. It was over 25 years ago, in 1997, that the computer program Deep Blue beat the world chess champion Garry Kasparov in a six-game match. Since then, computers have only gotten better at chess. The best human player has not.3

However, even in this area of undisputed silicon mastery over humans, computers don’t reign supreme. Enter cyborg chess, also called advanced chess or centaur chess. In cyborg chess, each human player uses a computer chess program of their choosing. The computer assists, but the human ultimately decides.

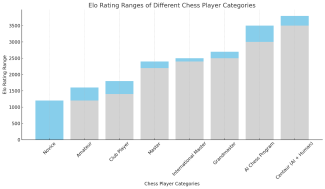

The human + computer pairing not only routinely beats either humans alone or computers alone but does so by a significant margin. Elo4 ratings, which are used to rate chess players, clearly show how much better the human + AI pairing is than AI alone. Humans provide the strategy and the oversight. AI provides the raw analytical power.6
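For readers unfamiliar with Elo, the standard formula turns a rating gap into an expected score. Here is a minimal sketch of it. The ratings below are made-up numbers chosen only to illustrate the formula; they are not actual ratings for any human, engine, or human + AI team.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Standard Elo expected score for player A against player B,
    where a win counts as 1, a draw as 0.5, and a loss as 0."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# Hypothetical ratings: an even matchup, a 100-point edge, a 400-point edge.
for rating_a, rating_b in ((3000, 3000), (3100, 3000), (3400, 3000)):
    score = expected_score(rating_a, rating_b)
    print(f"{rating_a} vs {rating_b}: expected score {score:.2f}")
```

Equal ratings give an expected score of 0.5, and a 400-point gap gives the stronger side roughly 10 points out of every 11, so even a modest rating edge translates into a consistent advantage over many games.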

Conclusion

Without a doubt, the relationship between AI and human intelligence is not about replacement, but augmentation.5 AI, with its data processing capabilities and efficiency, can significantly enhance human decision-making in various fields, from playing chess to driving cars. However, as highlighted through various examples, AI's overconfidence and eagerness to please can lead to misjudgments and errors. This, plus humans’ superior abilities at strategy, necessitates human oversight to guide, correct, and make final decisions.

The future of AI is undoubtedly bright and holds immense potential, but it is a future that should be approached with careful optimism. Human oversight will remain crucial in ensuring that AI's capabilities are harnessed effectively and ethically. The blend of human intuition and AI's computational power will pave the way for innovations that are both groundbreaking and reliable. As we continue to explore the possibilities of AI, it is important to remember that the best outcomes will likely be achieved not by AI alone but by the collaborative synergy of human and artificial intelligence.

1. To ChatGPT’s credit, I tried very hard to convince it that 2+2 does not equal 4. It refused to concede. It did, however, do a remarkable job of explaining to me what would have to change for 2+2 to not equal 4, everything from a different number system to “non-standard mathematical systems.”

2. Or your blog post 🙁

3. I suspect, however, that the average human player has gotten much better. Always having a willing and able opponent fine with playing exactly to your level, in addition to easy access to the game online, has undoubtedly improved human play in ways not previously possible.

4. Named for Arpad Elo, who is no relation to the excellent 70s and 80s band ELO, which stands for Electric Light Orchestra.

5. See how much stating something very confidently makes it more believable?

6. This chart was created by ChatGPT 4. It made some amazing-looking pictures, but a quick look shows that they convey almost no useful information. In fact, they're nonsensical. I show a few below. It’s a good example of how AI can be both really impressive and yet incredibly disappointing. Like with self-driving, as it gets better, we will still need humans to be vigilant to make sure these incredible-looking results are actually correct and useful.